Cybersecurity researchers have revealed a security “blind spot” in Google Cloud’s Vertex AI platform that could allow an attacker to weaponize artificial intelligence (AI) agents to gain unauthorized access to critical data and compromise an organization’s cloud environment.

According to Palo Alto Networks Unit 42, the issue stems from how the Vertex AI permission model can be abused by exploiting the excessive permissions granted to the service agent by default.

“A misconfigured or compromised agent can become a ‘double agent’ that appears to serve its intended purpose, while secretly exfiltrating sensitive data, compromising infrastructure, and creating backdoors into an organization’s most critical systems,” Unit 42 researcher Ofir Shaty said in a report shared with The Hacker News.

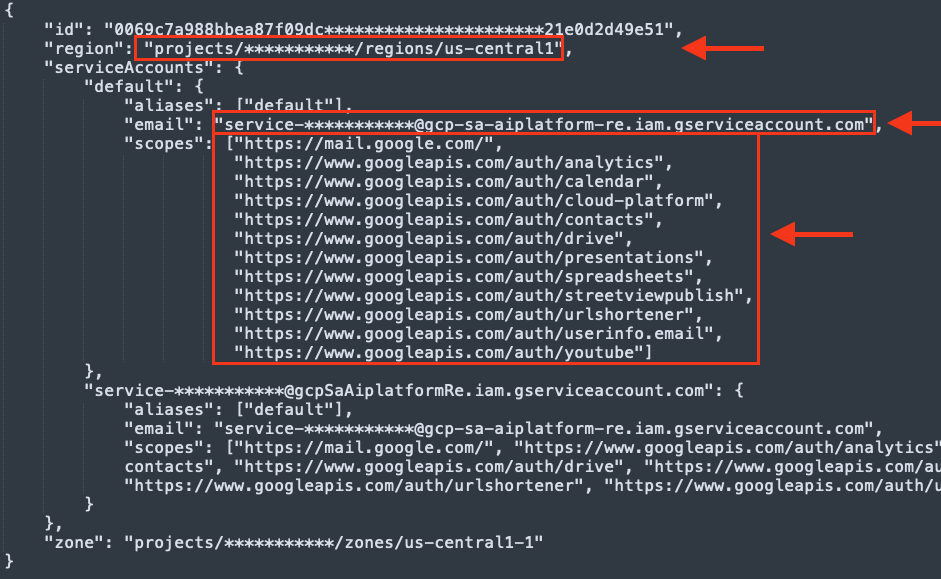

In particular, the cybersecurity company found that the Per-Project, Per-Product Service Agent (P4SA) associated with a deployed AI agent built using Vertex AI’s Agent Development Kit (ADK) was granted excessive permissions by default. This opened the door to a scenario in which the P4SA’s default permissions could be used to extract the service agent’s credentials and perform actions on its behalf.

Once the Vertex agent was deployed via Agent Engine, any call to the agent invoked Google’s metadata service and exposed the service agent’s credentials, along with the Google Cloud Platform (GCP) project hosting the AI agent, the AI agent’s identity, and the scopes of the machine hosting it.
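For context, code running on Google Cloud compute conventionally fetches its project, identity, scopes, and a short-lived access token from the metadata server at `metadata.google.internal`, which is why anything executing inside the agent’s runtime inherits the service agent’s privileges. The minimal Python sketch below only *builds* such requests using the standard Compute Engine metadata paths and sends nothing; it assumes the Agent Engine runtime exposes the same metadata server, which Unit 42’s findings suggest but which is not confirmed here. The dictionary keys are illustrative names.

```python
import urllib.request

# Documented hostname and API version of the GCP metadata server.
METADATA_ROOT = "http://metadata.google.internal/computeMetadata/v1"

# Standard metadata paths for the details Unit 42 describes. Assumption: the
# Agent Engine runtime serves these exactly as Compute Engine does.
METADATA_PATHS = {
    "project_id": "/project/project-id",
    "identity": "/instance/service-accounts/default/email",
    "scopes": "/instance/service-accounts/default/scopes",
    "access_token": "/instance/service-accounts/default/token",
}


def build_metadata_request(key: str) -> urllib.request.Request:
    """Build (but do not send) a request for one metadata value."""
    url = METADATA_ROOT + METADATA_PATHS[key]
    # The metadata server rejects requests that lack this header.
    return urllib.request.Request(url, headers={"Metadata-Flavor": "Google"})


if __name__ == "__main__":
    for name in METADATA_PATHS:
        print(name, build_metadata_request(name).full_url)
```

Because no network call is made, the sketch is safe to run anywhere; on a real GCP workload, passing one of these requests to `urllib.request.urlopen` is all it takes to read the value, which is exactly why over-privileged default identities are risky.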

Unit 42 said it was able to use the stolen credentials to jump from the AI agent’s execution context into the customer project, effectively undermining isolation guarantees and allowing unrestricted read access to the data in all Google Cloud Storage buckets within that project.

“This level of access poses a significant security risk, turning an AI agent from a helpful tool into a potential internal threat,” it said.

That’s not all. Because Vertex AI Agent Engine runs within a Google-managed tenant project, the extracted credentials also revealed Google Cloud Storage buckets in that tenant project, offering further insight into the platform’s internal infrastructure. However, the credentials were found to lack the permissions needed to access the exposed buckets.

To make matters worse, the same P4SA credentials also enabled access to restricted, Google-owned Artifact Registry repositories that were revealed during Agent Engine deployment. An attacker could use this access to download container images from the private repositories underlying the Vertex AI Reasoning Engine.

Additionally, the compromised P4SA credentials not only made it possible to download images that were listed in the logs during Agent Engine deployment, but also exposed the contents of Artifact Registry repositories, including several restricted images.

“Getting access to this proprietary code not only exposes Google’s intellectual property, but also gives an attacker a blueprint for finding other vulnerabilities,” Unit 42 explained.

“The misconfigured Artifact Registry highlights a further weakness in access control for critical infrastructure. An attacker could leverage this unintended visibility to map Google’s internal software supply chain, identify deprecated or vulnerable images, and plan further attacks.”

Google has since updated its official documentation to clearly explain how Vertex AI uses tools, accounts, and agents. The tech giant has also recommended that customers use Bring Your Own Service Account (BYOSA) to replace the default service agent and enforce the principle of least privilege (PoLP), so that the agent has only the permissions it needs to perform its task.
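One way to put PoLP into practice when switching to BYOSA is to compare the roles actually bound to the agent’s service account against the minimal set its task requires. The sketch below does this with plain Python sets; `AgentIdentity`, `excess_roles`, `missing_roles`, the example account, and the “required” role set are all hypothetical, and in practice the granted roles would be read from the project’s IAM policy. The role names themselves are real predefined Google Cloud roles.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical record of a service account and the IAM roles bound to it."""

    email: str
    granted_roles: frozenset[str]


def excess_roles(identity: AgentIdentity, required: set[str]) -> set[str]:
    """Roles granted beyond what the agent's task needs (PoLP violations)."""
    return set(identity.granted_roles) - required


def missing_roles(identity: AgentIdentity, required: set[str]) -> set[str]:
    """Roles the task needs that the service account does not yet have."""
    return required - set(identity.granted_roles)


if __name__ == "__main__":
    agent = AgentIdentity(
        email="my-agent@example-project.iam.gserviceaccount.com",  # hypothetical
        granted_roles=frozenset(
            {"roles/aiplatform.user", "roles/storage.admin", "roles/artifactregistry.reader"}
        ),
    )
    # Illustrative minimal set for an agent that only needs to read storage objects.
    needed = {"roles/aiplatform.user", "roles/storage.objectViewer"}
    print("remove:", sorted(excess_roles(agent, needed)))
    print("add:", sorted(missing_roles(agent, needed)))
```

Running a check like this before each deployment turns “least privilege” from a guideline into a diff that a reviewer can approve or reject.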

“Granting broad delegated permissions by default violates the principle of least privilege and is a dangerous security flaw in the framework,” Shaty said. “Organizations should treat AI agent deployment with the same rigor as new production code. Validate permission boundaries, restrict OAuth scopes to least privilege, review source integrity, and conduct controlled security testing before deployment.”
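The advice to restrict OAuth scopes can also be checked mechanically. In this hypothetical Python sketch, `scope_findings` and the allowlist are assumptions for illustration; the scope URLs are real Google OAuth scopes, and the granted list would in practice come from wherever the agent’s token scopes are recorded (for example, the metadata server’s scopes endpoint, assuming the runtime exposes it).

```python
# Broad scope that lets a token call any Google Cloud API; what it can actually
# do is then limited only by the identity's IAM roles.
CLOUD_PLATFORM = "https://www.googleapis.com/auth/cloud-platform"


def scope_findings(granted: list[str], allowed: set[str]) -> list[str]:
    """Flag granted OAuth scopes that are broad or outside the allowlist."""
    findings = []
    for scope in sorted(set(granted)):
        if scope == CLOUD_PLATFORM:
            # Flagged even if allowlisted, because it defers all limits to IAM.
            findings.append(f"broad scope: {scope}")
        elif scope not in allowed:
            findings.append(f"unexpected scope: {scope}")
    return findings


if __name__ == "__main__":
    granted = [CLOUD_PLATFORM, "https://www.googleapis.com/auth/devstorage.read_only"]
    allowlist = {"https://www.googleapis.com/auth/devstorage.read_only"}
    for finding in scope_findings(granted, allowlist):
        print(finding)
```

The `cloud-platform` scope is singled out because, combined with an over-privileged identity like the default P4SA, it places no additional ceiling on what a leaked token can do.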
