There’s been another shift away from complexity in web development this year, with front-end frameworks like Astro and Svelte gaining popularity as more developers look for solutions outside of the React ecosystem. Meanwhile, native web platform features have shown that they are up to the task of building advanced web applications, with CSS in particular improving a great deal over 2025.

All that said, perhaps the biggest trend in web development this year has been the rise of AI-assisted coding, which, it turns out, has a tendency to default to React and React’s main framework, Next.js. Because React dominates the front end, large language models (LLMs) have had plenty of React code to train on.

Let’s take a closer look at the five biggest web development trends of 2025.

1. The Rise of Native Web Features

Over 2025, several native web features quietly caught up with the functionality offered by JavaScript frameworks. For example, the View Transition API – which lets your website transition smoothly between pages – became part of the Baseline 2025 browser support index. So it is now widely available for web developers to use.
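As a minimal sketch of what this looks like in practice, the TypeScript below wraps a DOM update in a view transition when the browser supports it and falls back to a plain update otherwise. It assumes the same-document form of the API, `document.startViewTransition()`; the helper name `updateWithTransition` and the `TransitionCapableDocument` type are made up for this illustration.

```typescript
// Minimal shape of the part of `document` this sketch relies on.
// (Hypothetical type name; in a browser you would pass the real `document`.)
type TransitionCapableDocument = {
  startViewTransition?: (update: () => void) => unknown;
};

// Runs `update` inside a view transition when the API is available,
// otherwise applies the update immediately.
// Returns true if a transition was started, false if the fallback ran.
function updateWithTransition(
  doc: TransitionCapableDocument,
  update: () => void
): boolean {
  if (typeof doc.startViewTransition === "function") {
    doc.startViewTransition(update);
    return true;
  }
  update(); // Older browsers: same end state, just without the animation.
  return false;
}
```

In a browser, a call might look like `updateWithTransition(document, () => { /* swap the page content here */ })`. Passing the document in as a parameter keeps the feature check explicit and makes the fallback path easy to exercise.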

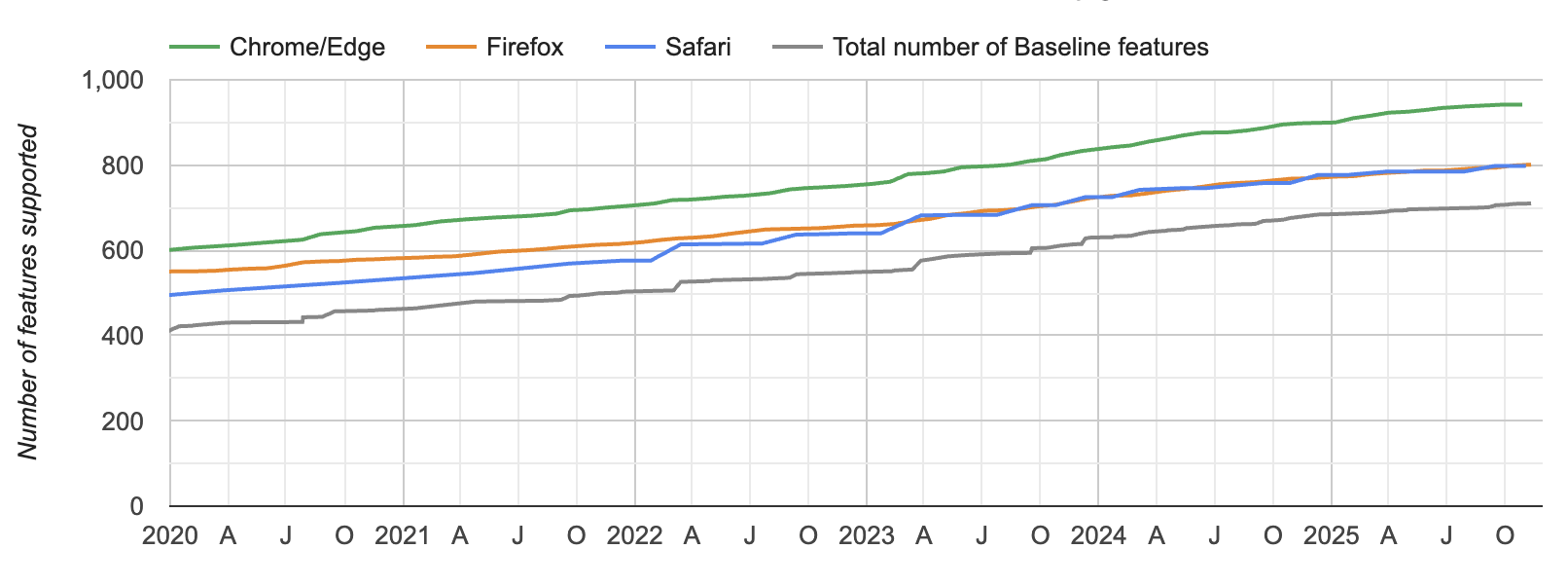

Baseline is a project coordinated by the WebDX Community Group at W3C, which includes representatives from Google, Mozilla, Microsoft and other organizations. It has been active since 2023, but this year it established itself as a useful tool for practicing web developers.

Consistent annual growth of Baseline features, via the Web Platform Status site.

As The New Stack’s Mary Branscombe reported in June, there are several ways to keep track of what becomes part of Baseline:

Google’s web.dev has monthly updates on Baseline features and news; the “WebDX features explorer” lets you see which features are Limited Availability, Newly Available or Widely Available; and monthly release notes summarize which features have reached a new Baseline status.

From a web performance point of view, there is little reason not to use native web features. As Jeremy Keith, a long-time web developer, said recently, architecture “limits the possibilities of what you can do in today’s web browsers.” In a follow-up post, Keith recommended that developers stop using React in the browser, largely because of the file-size cost to the user. Instead, he encouraged devs to “explore what you can do with vanilla JavaScript in the browser.”

2. AI Coding Assistants Default to React

This year, AI became a common part of web development tools (although it is not always welcomed by developers, especially those who gather on Mastodon or Bluesky rather than X or LinkedIn). Whether you are a fan of AI in application development or not, there is one big concern: the tendency of LLMs to default to React and Next.js.

When OpenAI’s GPT-5 was released in August, one of its touted strengths was coding. GPT-5 initially received very mixed reviews from developers, so at the time, I reached out to OpenAI to ask about its coding features. Ishaan Singal, a researcher at OpenAI, responded via email.

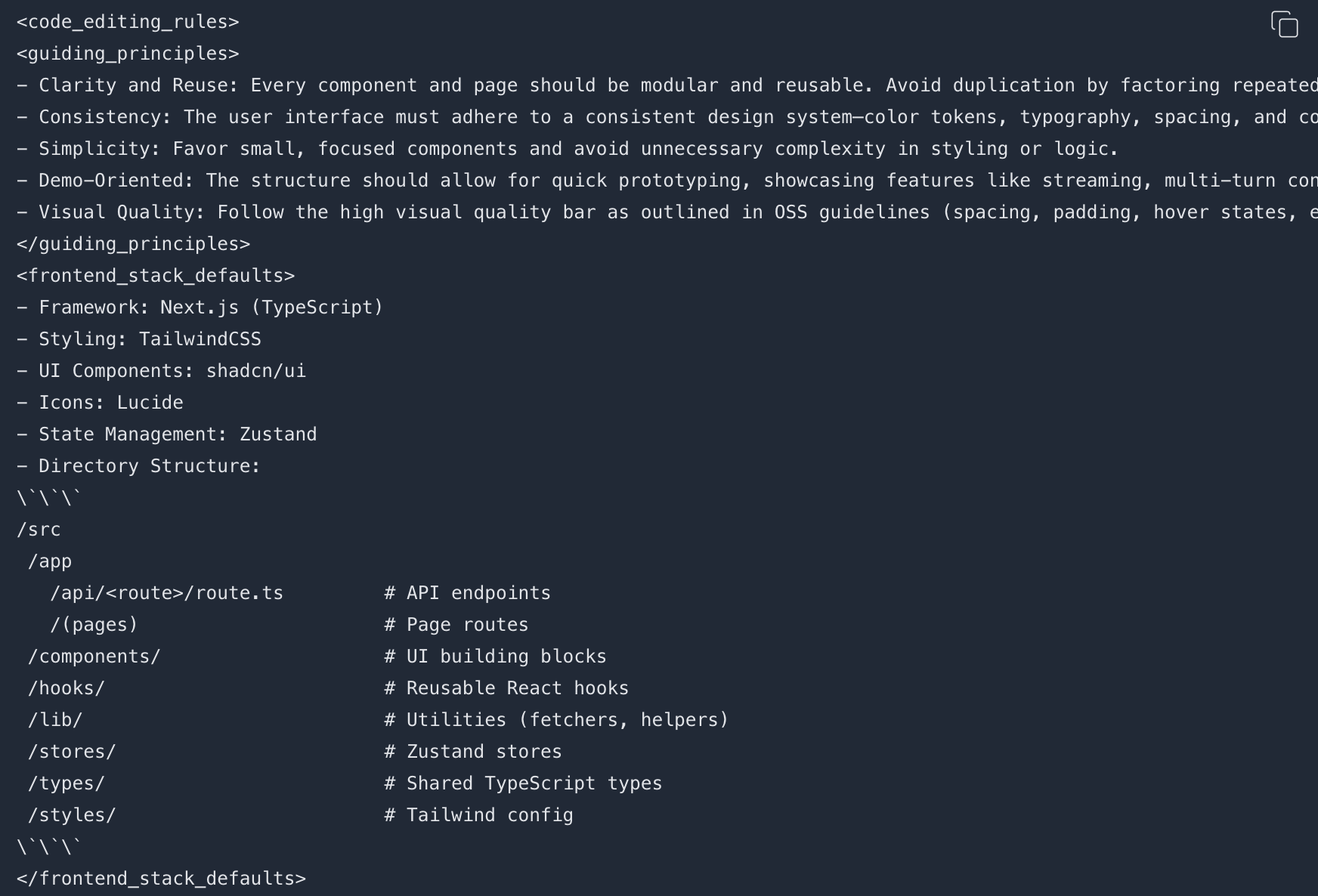

I noted to Singal that the GPT-5 prompting guide recommends three frameworks: Next.js (TypeScript), React and HTML. Has there been any collaboration with the Next.js and React project teams, I asked, to optimize GPT-5 for those frameworks?

“We chose these methods based on their popularity and familiarity, but we did not directly interact with Next.js or React teams in GPT-5,” he answered.

An example of “editing code sorting rules for GPT-5” from OpenAI’s GPT-5 guide.

We know that Vercel, the company behind the Next.js framework, favors GPT-5. On launch day, it called GPT-5 “the best example of AI.” So there’s a nice quid pro quo going on here – GPT-5 was able to become an expert on Next.js because of the framework’s popularity, which will probably increase that popularity even more. That helps both OpenAI and Vercel.

“At the end of the day, it’s a developer’s choice,” Singal concluded, about which web technology a dev wants to use. But established repos have better support from the community, which helps developers look after their code themselves.

3. Emergence of Web Apps in AI Agents and Chatbots

This year, we have seen the emergence of mini-web applications within AI chatbots and agents.

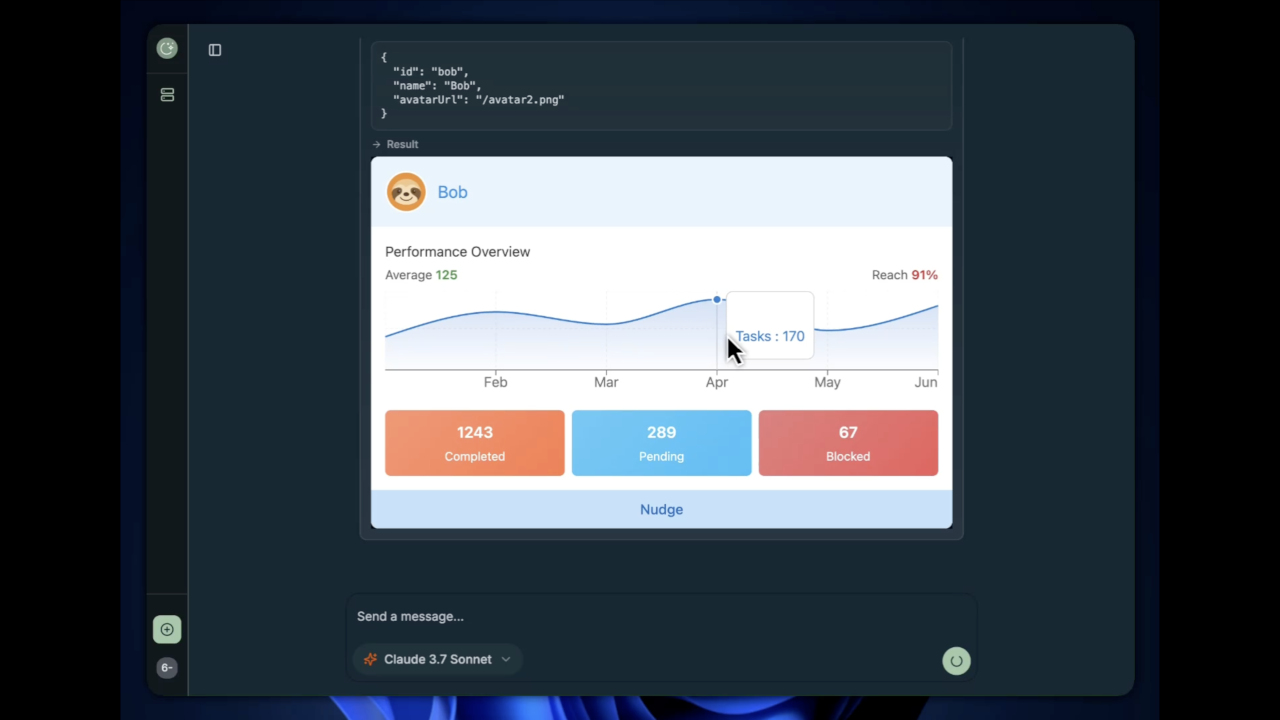

MCP-UI was the first sign that the web would become a central part of AI agents. As the name suggests, MCP-UI uses the well-known Model Context Protocol as the basis of communication. The project “aims to validate how models and applications can request the display of rich HTML interfaces within a client application.”

In an interview in August, the two founders (one of whom worked at Shopify at the time) explained that there are two types of SDKs for MCP-UI: a client SDK and a server SDK to connect to MCP servers. The server SDK is available in TypeScript, Ruby and Python.

An MCP-UI interface embedded in a Claude 3.7 Sonnet chat.

MCP-UI sounded promising, but was quickly overshadowed by OpenAI’s Apps SDK, which launched in early October. The Apps SDK allows third-party developers to create web-based apps that work as interactive parts of ChatGPT chats – reminding many of us of when Apple launched its App Store in 2008.

A defining feature of the Apps SDK is its web-based UI (similar to MCP-UI). The ChatGPT app component is a web UI that runs in a sandboxed iframe within the ChatGPT chat. ChatGPT acts as the app host. You can think of a third-party ChatGPT app as a “small web app” embedded directly into the ChatGPT interface.
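To make the hosting model concrete, here is a generic sketch of how any host page could build the markup for a sandboxed iframe. It is not OpenAI’s implementation: the function names, the `EmbeddedApp` type and any URL passed in are hypothetical, and the only platform features assumed are the standard `sandbox`, `title` and `src` attributes of the HTML `iframe` element.

```typescript
// Hypothetical description of a third-party app the host wants to embed.
type EmbeddedApp = { title: string; url: string };

// Escape characters that are unsafe inside a double-quoted HTML attribute.
function escapeAttribute(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Build the iframe markup. `sandbox="allow-scripts"` lets the embedded app
// run JavaScript while withholding other capabilities: the content is not
// treated as same-origin, and it cannot navigate the top-level page or
// submit forms.
function renderSandboxedApp(app: EmbeddedApp): string {
  return (
    `<iframe sandbox="allow-scripts" ` +
    `title="${escapeAttribute(app.title)}" ` +
    `src="${escapeAttribute(app.url)}"></iframe>`
  );
}
```

The design point is that the host decides which capabilities the embedded app receives, so the “small web app” can be interactive without gaining access to the surrounding chat page.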

By the end of October, industry heavyweights like Vercel had learned how to use their JavaScript frameworks to build ChatGPT applications. Vercel’s fast integration of Next.js and the ChatGPT application platform shows that AI chatbots will not be limited to interactive widgets – advanced web applications will live on this platform, too.

4. Web AI and On-Device Inference in the Browser

A related development over 2025 has been the rise of client-side AI in the browser, which allows LLM inference to take place on the device. Google has been particularly prominent in this approach, and its term for it is “Web AI.” Jason Mayes, who leads these efforts at Google, defines Web AI as “the ability to apply any type of machine learning or client-side services to a user’s device through a web browser.”

In November, Google hosted an invitation-only event called the Google Web AI Summit. Afterwards, I spoke with Mayes, the event’s organizer and MC. He explained that the key technology is LiteRT.js, Google’s Web AI runtime aimed at production web applications. It builds on LiteRT, which is designed to run machine learning (ML) models directly on devices (mobile, embedded, or edge) instead of relying on cloud computing.

In a keynote speech at the Web AI Summit, Parisa Tabriz, vice president and general manager of Chrome and the web ecosystem at Google, highlighted the AI APIs built into Chrome last August, as well as the release of Gemini Nano – Google’s main on-device model – as a feature built into Chrome last June. These and other web technologies drive the current Web AI trend.

Parisa Tabriz at the Web AI Summit.

Another innovation that Google was involved in, along with Microsoft, was the release of WebMCP, which allows devs to control how AI agents interact with websites using client-side JavaScript. In a September interview, Kyle Pflug, group product manager for the web platform at Microsoft Edge, explained that “the main idea is to allow web developers to define the ‘tools’ of their website in JavaScript, similar to the tools that would be provided by a traditional MCP server.”
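The idea Pflug describes can be sketched in plain TypeScript. The snippet below is not the WebMCP API, which is still an evolving proposal; `SiteTool`, `ToolRegistry` and the `add-to-cart` tool are hypothetical names that only illustrate the shape of a page-defined tool: a name, a description an agent can read, and a function to run.

```typescript
// Hypothetical shape of a tool that a page exposes to an AI agent.
interface SiteTool {
  name: string;
  description: string;
  execute: (args: Record<string, unknown>) => string;
}

// A tiny in-page registry standing in for whatever the browser would provide.
class ToolRegistry {
  private tools: SiteTool[] = [];

  register(tool: SiteTool): void {
    this.tools.push(tool);
  }

  // What an agent would see when it asks which tools the page offers.
  list(): { name: string; description: string }[] {
    return this.tools.map((tool) => ({
      name: tool.name,
      description: tool.description,
    }));
  }

  // Invoke a tool by name, as an agent would after choosing one.
  call(name: string, args: Record<string, unknown>): string {
    const match = this.tools.filter((tool) => tool.name === name)[0];
    if (!match) throw new Error(`Unknown tool: ${name}`);
    return match.execute(args);
  }
}

// Example: a shop page describes one action in its own client-side code.
const registry = new ToolRegistry();
registry.register({
  name: "add-to-cart",
  description: "Add a product to the shopping cart by its ID.",
  execute: (args) => `Added product ${String(args.productId)} to the cart`,
});
```

Because the tool is ordinary client-side code, the site keeps control over exactly what an agent can do, rather than leaving the agent to guess its way through the page’s UI.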

Web AI is not only promoted by commercial companies. The World Wide Web Consortium (W3C) is exploring the building blocks of the “web agent,” which includes using MCP-UI, WebMCP and an emerging standard called NLWeb (developed at Microsoft).

5. The ‘Vite-ification’ of the JavaScript Ecosystem

It might feel like AI is taking over web development this year – and it really has. But the front end has also seen its share of innovation. One product in particular stood out.

Vite, created by Evan You, is now the build tool behind many modern front-end frameworks, including Vue, SvelteKit, Astro and React – with experimental support also from Remix and Angular. In an interview with The New Stack in September, You told me that the key to Vite’s success was its early use of ES Modules (ESM), a standardized JavaScript module system that allows you to “break up JavaScript code into different pieces, different modules that you can load.”
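One way to picture loading modules on demand is the standard dynamic `import()` expression, which fetches an ES module only when the code asks for it. The helper below is a small sketch built around that idea, not anything taken from Vite itself; `lazyModule` and the `./chart.js` path mentioned afterwards are hypothetical.

```typescript
// Wraps a loader (typically `() => import("./some-module.js")`) so the
// module is requested on first use and the same promise is reused afterwards.
function lazyModule<T>(loader: () => Promise<T>): () => Promise<T> {
  let cached: Promise<T> | undefined;
  return () => {
    if (cached === undefined) {
      cached = loader(); // First call: start loading the module.
    }
    return cached; // Later calls: reuse the in-flight or finished load.
  };
}
```

In a browser, this might be used as `const loadChart = lazyModule(() => import("./chart.js"));`, so the chart code is only downloaded when `loadChart()` is first called. Browsers already avoid fetching the same module URL twice; the wrapper simply makes the on-demand step explicit.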

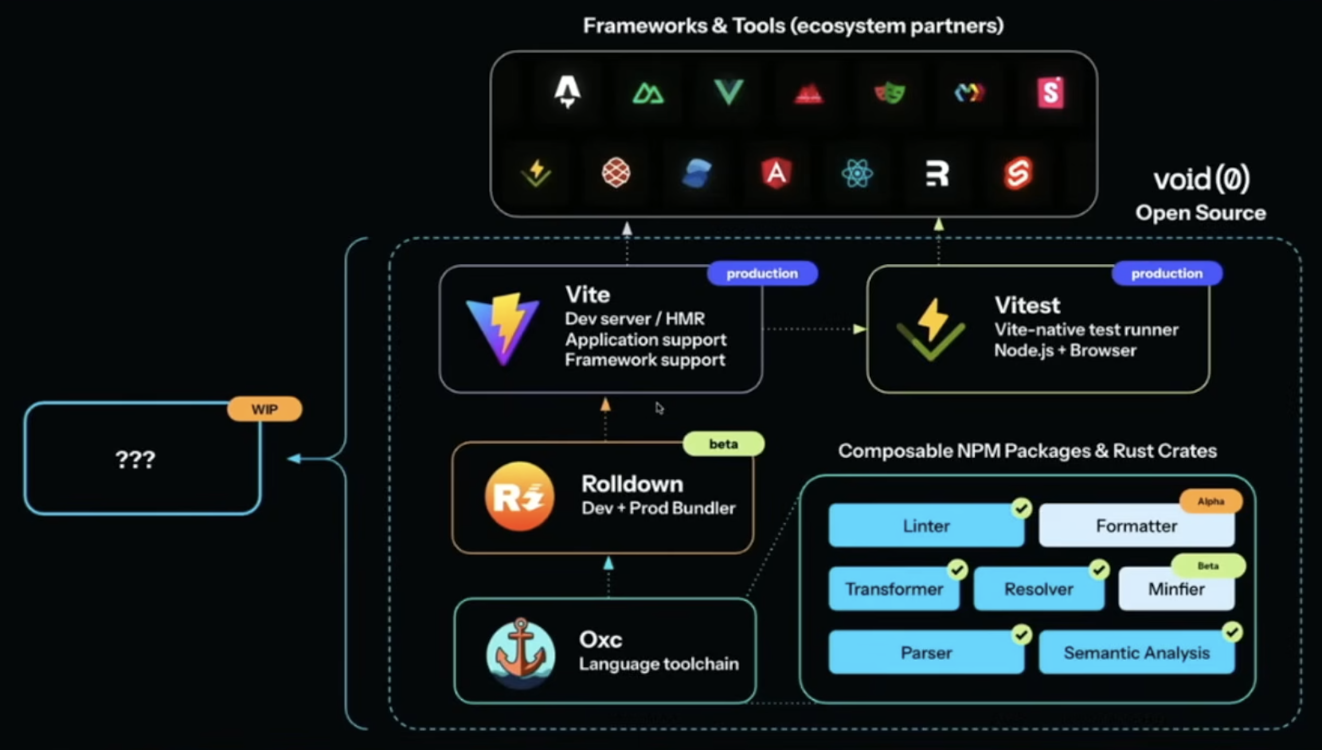

The Vite ecosystem, as presented by Evan You at ViteConf.

You and his company, VoidZero, are now building Vite+, a new unified JavaScript toolchain that aims to solve JavaScript’s fragmentation. At this year’s ViteConf event, You officially unveiled Vite+ and positioned it as a development tool for businesses. He said it includes “everything you love about Vite – and everything you’ve done together.”

Crossroads for Web Development

By the end of 2025, it feels like we’re at a crossroads. On the one hand, there is a way out of the React complexity conundrum: use native web features and tools like Astro that ease the burden on users. But while that was a clear trend this year, it is at risk of being overshadowed in 2026 by our increasing reliance on AI tools for coding – which, as mentioned, tend to default to React.

The truth is, many developers now – including hundreds of thousands of “vibe coders” who were previously not part of the development ecosystem – will continue to be fed React code by AI systems. That makes it even more important for the web development community to keep promoting and advocating for native web features in the coming year.
