Anthropic on Tuesday confirmed that the internal source code for its popular artificial intelligence (AI) coding assistant, Claude Code, was inadvertently released due to human error.

“No sensitive customer data or information was affected or exposed,” an Anthropic spokesperson said in a statement shared with CNBC. “This was an issue with the release packages caused by human error, not a security breach. We are taking steps to prevent this from happening again.”

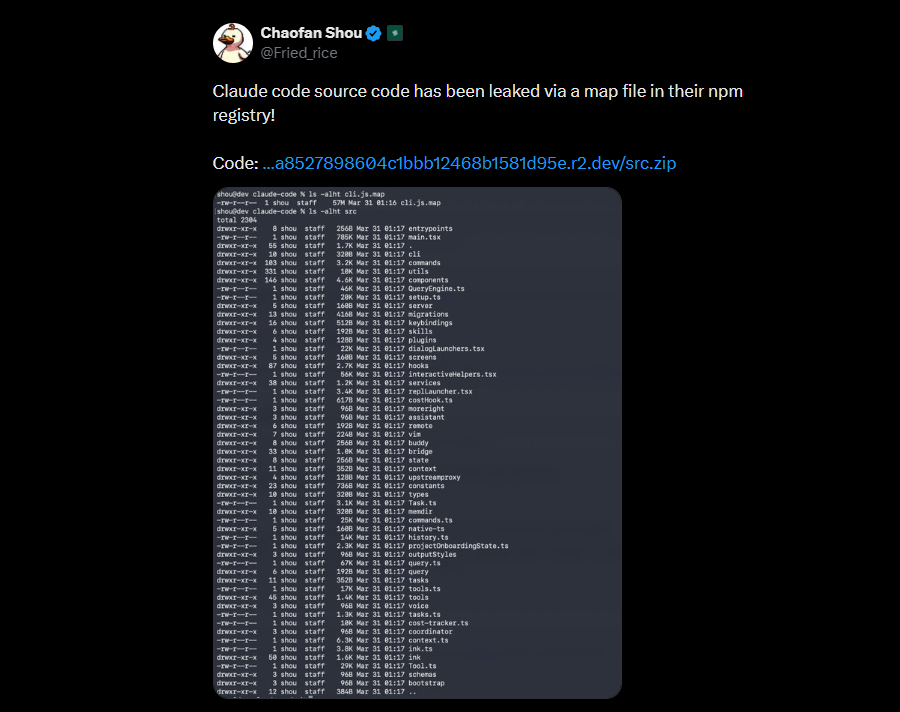

The discovery came after the AI company released version 2.1.88 of the Claude Code npm package, with users spotting that it contained a source map file that could be used to access the Claude Code source code, which comprises about 2,000 TypeScript files and more than 512,000 lines of code. The version is no longer available for download from npm.
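To illustrate why a stray source map matters: the Source Map v3 format allows an optional `sourcesContent` array that embeds the original text of every file named in `sources`. The sketch below shows how little work recovery takes when that field is populated. It is an illustration under stated assumptions, not a reconstruction of the leaked file: the example map, its paths, and the helper name `extractEmbeddedSources` are hypothetical, and the article does not say which fields the Claude Code map actually contained.

```typescript
// Minimal shape of a Source Map v3 document (only the fields used here).
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Pair each original file path with its embedded source text, when present.
// Entries whose content is null or missing are skipped.
function extractEmbeddedSources(map: SourceMapV3): Record<string, string> {
  const recovered: Record<string, string> = {};
  const contents = map.sourcesContent ?? [];
  map.sources.forEach((path, i) => {
    const text = contents[i];
    if (typeof text === "string") {
      recovered[path] = text;
    }
  });
  return recovered;
}

// Hypothetical map: paths and contents are invented for illustration.
const exampleMap: SourceMapV3 = {
  version: 3,
  sources: ["src/hello.ts", "src/missing.ts"],
  sourcesContent: ["export const hello = (): string => 'hi';", null],
  mappings: "",
};

const recoveredFiles = extractEmbeddedSources(exampleMap);
console.log(Object.keys(recoveredFiles)); // [ 'src/hello.ts' ]
```

Publishers who want to keep sources private typically either exclude `.map` files from the published package or build maps without embedded sources.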

Security researcher Chaofan Shou was the first to publicly flag it on X, saying “Claude’s source code has been exposed in a map file in their npm registry!” The X post has since garnered over 28.8 million views. The leaked codebase is still available via a public GitHub repository, where it has more than 84,000 stars and 82,000 forks.

A code leak of this kind is significant, as it gives software developers and Anthropic’s competitors a blueprint of how the popular coding tool works. Developers who examined the code have already published details of its self-healing memory architecture, designed to work around the constraints of the model’s fixed context window, as well as other internal components.

These include a tools system that exposes capabilities such as file reading and bash execution, a query engine to handle LLM API calls and orchestration, multi-agent orchestration to spawn “sub-agents” or swarms that carry out complex tasks, and a bidirectional communication layer that connects IDE extensions to the Claude Code CLI.

The leak also shed light on a feature called KAIROS that allows Claude Code to operate as a persistent background agent that can periodically fix errors or run tasks on its own without waiting for human input, and even send notifications to users. Complementing this is a new “dream” mode that will allow Claude to think continuously in the background to develop ideas and iterate on existing ones.

Perhaps the most interesting detail is the tool’s “Undercover Mode” for making “stealth” contributions to open-source repositories. “You are operating UNDERCOVER in a PUBLIC/OPEN-SOURCE repository. Your commit messages, PR titles, and PR bodies MUST NOT contain any Anthropic-internal information. Do not blow your cover,” reads the system prompt.

Another interesting finding involves Anthropic’s attempts to covertly fight model distillation attacks. The system has controls in place that inject fake tool definitions into API requests to poison training data if competitors attempt to scrape Claude Code’s outputs.

Typosquat npm Packages Pushed to Registry

With Claude Code’s internals now laid bare, the development risks handing bad actors the ammunition to bypass guardrails and trick the system into performing unintended actions, such as running malicious commands or exfiltrating data.

“Instead of brute-forcing jailbreaks and prompt injections, attackers can now study and fuzz exactly how data flows through Claude Code’s four-stage context management pipeline and craft payloads designed to survive compaction, effectively persisting across an arbitrarily long session,” AI security company Straiker said.

Even more worrying is the fallout from the Axios supply chain attack, as users who installed or updated Claude Code via npm on March 31, 2026, between 00:21 and 03:29 UTC may have pulled in a trojanized version of the HTTP client that contains a remote access trojan. Users are advised to immediately downgrade to a safe version and rotate all secrets.
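For teams triaging their exposure, the reported window translates into a simple timestamp check. The following is a minimal sketch, assuming the install or update time is already known (for example, from CI logs) and treating both ends of the window as inclusive; the function name `installedDuringWindow` is hypothetical.

```typescript
// Exposure window reported above, in UTC. Date.UTC months are 0-indexed (2 = March).
const WINDOW_START_MS = Date.UTC(2026, 2, 31, 0, 21);
const WINDOW_END_MS = Date.UTC(2026, 2, 31, 3, 29);

// Returns true when an install or update happened inside the window.
// Assumption: both boundaries are treated as inclusive.
function installedDuringWindow(installTime: Date): boolean {
  const t = installTime.getTime();
  return t >= WINDOW_START_MS && t <= WINDOW_END_MS;
}

console.log(installedDuringWindow(new Date("2026-03-31T01:00:00Z"))); // true
console.log(installedDuringWindow(new Date("2026-03-31T04:00:00Z"))); // false
```

A match only indicates that an install fell inside the window; confirming compromise still requires inspecting the resolved dependency versions.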

In addition, attackers are already capitalizing on the leak by typosquatting internal npm package names, in an attempt to target people who try to compile the leaked Claude Code source code and to stage dependency confusion attacks. The package names, all published by a user named “pacifier136,” are listed below:

- audio-capture-napi

- color-diff-napi

- image-processor-napi

- modifiers-napi

- url-handler-napi

“At the moment they are empty stubs (`module.exports = {}`), but this is how these attacks work – squat the name, wait for downloads, then push a malicious update that hits everyone who installed it,” security researcher Clément Dumas said in a post on X.
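One practical response is to check whether a project declares any of the squatted names. The sketch below is a minimal illustration, assuming the manifest has already been parsed from `package.json`; the blocklist holds a subset of the package names listed above, and the function name `findSquattedDependencies` and the example manifest are hypothetical.

```typescript
// A subset of the package names listed above.
const SQUATTED_NAMES: string[] = [
  "audio-capture-napi",
  "color-diff-napi",
  "image-processor-napi",
  "url-handler-napi",
];

// Only the package.json fields this sketch reads.
interface PackageManifest {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
  optionalDependencies?: Record<string, string>;
}

// Returns the declared dependency names that appear on the blocklist, sorted.
function findSquattedDependencies(
  manifest: PackageManifest,
  blocklist: string[]
): string[] {
  const declared = Object.keys({
    ...manifest.dependencies,
    ...manifest.devDependencies,
    ...manifest.optionalDependencies,
  });
  return declared.filter((name) => blocklist.indexOf(name) !== -1).sort();
}

// Hypothetical manifest, invented for illustration.
const exampleManifest: PackageManifest = {
  dependencies: { "url-handler-napi": "^1.0.0", "left-pad": "^1.3.0" },
  devDependencies: { "color-diff-napi": "*" },
};

console.log(findSquattedDependencies(exampleManifest, SQUATTED_NAMES));
```

This only inspects direct dependencies; transitive ones would need a pass over the lockfile instead.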

The incident is Anthropic’s second major blunder in a week. Details about the company’s upcoming AI model, along with other internal information, were left accessible via the company’s content management system (CMS) last week. Anthropic later acknowledged that it was testing the model with early access customers, describing it as the “most capable we’ve built to date,” according to Fortune.
