OpenAI has disclosed two significant security vulnerabilities affecting its widely used artificial intelligence platforms, ChatGPT and Codex, following responsible disclosures from cybersecurity researchers. While there is no evidence that either vulnerability has been exploited in real-world attacks, experts say the incidents highlight the risks that emerge as AI systems evolve into full-fledged computing environments.

A Covert Data Exfiltration Channel Found in ChatGPT

The first vulnerability, identified by Check Point researchers, exposed a new way to silently extract user data from ChatGPT conversations without the user’s knowledge.

According to the Check Point report, attackers could craft a malicious prompt capable of turning an ordinary conversation into a hidden exfiltration channel. This method allowed access to:

- User messages

- Attached documents

- Other potentially sensitive content

The attack bypassed ChatGPT’s built-in defenses by exploiting a previously unknown side channel within its Linux-based runtime environment.

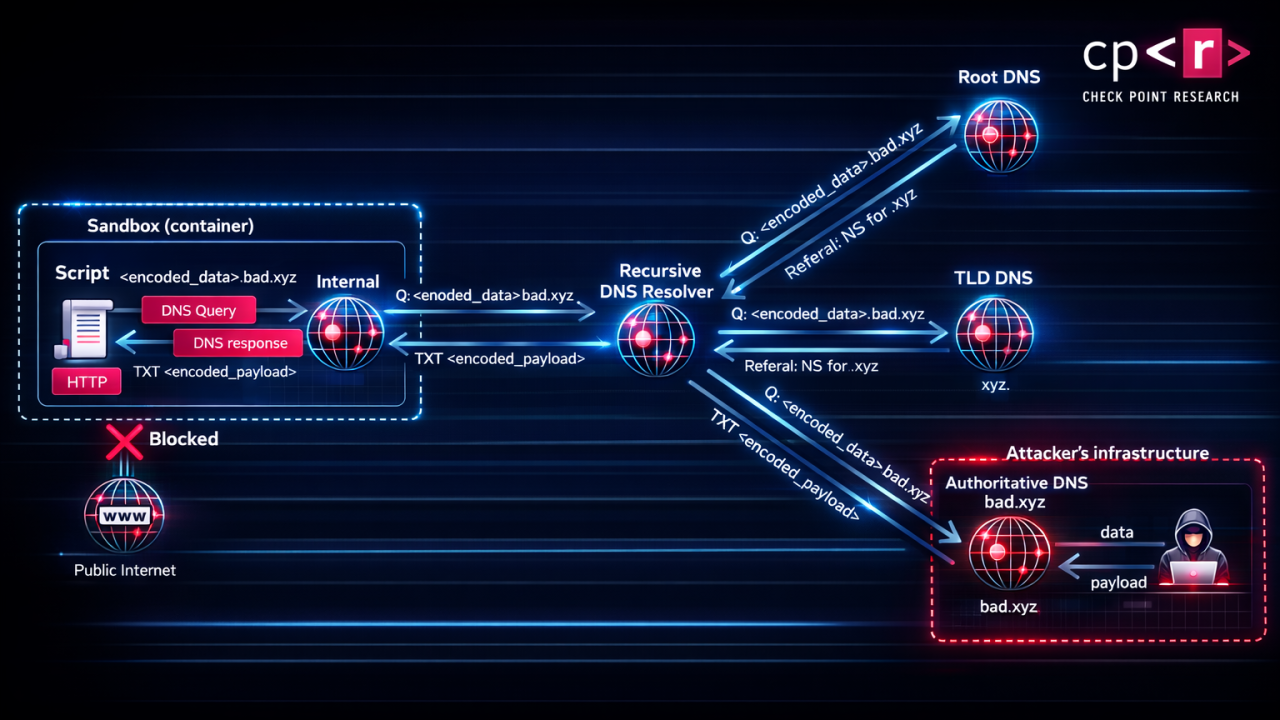

Instead of relying on standard web requests, which are often restricted, the technique used a covert communication channel based on DNS. By encoding information in DNS queries, attackers could transmit data without triggering security warnings or requiring the user’s permission.

The researchers emphasized that this behavior was effectively invisible: the system assumed that the execution environment was isolated, so it did not treat the activity as a transfer of data to an external party.
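To make the DNS channel described above more concrete from a defender's point of view, here is a minimal detection sketch. It is not Check Point's method or anything OpenAI deployed; the function names and both thresholds are hypothetical, and the "last two labels are the registered domain" rule is a deliberate simplification. The idea is only that data smuggled into DNS queries tends to show up as unusually long or random-looking subdomain labels.

```python
import math
from collections import Counter

# Hypothetical thresholds for illustration; real deployments would tune these.
MAX_LABEL_LEN = 40        # DNS allows up to 63 characters per label
MIN_ENTROPY_BITS = 3.5    # bits per character that we treat as "random-looking"
MIN_LEN_FOR_ENTROPY = 16  # entropy is too noisy on very short labels


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of text in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_dns_exfiltration(query_name: str) -> bool:
    """Heuristically flag a DNS query name that may carry encoded data."""
    labels = query_name.rstrip(".").split(".")
    # Simplification: assume the last two labels are the registered domain.
    for label in labels[:-2]:
        if len(label) > MAX_LABEL_LEN:
            return True
        if len(label) >= MIN_LEN_FOR_ENTROPY and shannon_entropy(label) >= MIN_ENTROPY_BITS:
            return True
    return False
```

A heuristic like this produces both false positives and false negatives (hex-encoded data, for instance, has lower per-character entropy), so it would at most complement egress filtering and resolver-level logging rather than replace them.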

From Prompt Injection to Persistent Threat

The vulnerability also increased the risk posed by custom GPTs, which allow users to create tailored AI agents.

Instead of convincing users to paste in a malicious prompt, attackers could embed malicious logic directly into these custom configurations, turning them into persistent attack vectors.

In practical terms, a user could be tricked with a seemingly benign prompt that promises, for example:

- Unlocking hidden features

- Improving performance

- Access to premium capabilities

Behind the scenes, however, the AI could start exfiltrating data through the covert DNS channel.

Patch Timeline and Risk Assessment

OpenAI resolved the issue on February 20, 2026, following responsible disclosure. The company said there was no indication of active exploitation, although the nature of the vulnerability raised concerns about how difficult such abuse is to detect.

Such vulnerabilities create “blind spots” in AI systems, where neither users nor platforms can easily detect misuse.

Broader Implications for Enterprise AI Adoption

The findings come at a time when AI tools like ChatGPT are increasingly being incorporated into business processes, often dealing with:

- Proprietary business information

- Customer information

- Internal communications

Organizations are advised to implement layered security frameworks, including:

- Automated monitoring of AI interactions

- Prompt injection protections

- Data loss prevention (DLP) controls

- Isolation of sensitive workloads
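As a rough illustration of the DLP item in the list above, the sketch below redacts a couple of sensitive patterns from text before it is handed to an AI tool. Everything here is an assumption made for the example: the `redact` helper and the `PATTERNS` table are hypothetical, and the token pattern is based on GitHub's commonly seen prefixed token format rather than an official, exhaustive rule.

```python
import re

# Illustrative patterns only; a real DLP policy would cover many more data types.
PATTERNS = {
    # Assumed shape of prefixed GitHub tokens (ghp_, gho_, ghu_, ghs_, ghr_).
    "github_token": re.compile(r"\bgh[pousr]_[A-Za-z0-9]{36,}\b"),
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a placeholder and count matches per pattern."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[REDACTED:{label}]", text)
        counts[label] = n
    return text, counts
```

The returned counts could feed the monitoring mentioned in the same list, for example by alerting when a prompt contained a credential at all.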

The Rise of “Prompt Poaching” with Browser Extensions

The disclosure also coincides with a growing trend identified by security researchers: malicious or compromised browser extensions capable of hijacking chatbot conversations.

Such tools may silently collect:

- Evidence points

- Intellectual property

- Personal information

These risks extend beyond individuals to organizations, where compromised conversations can expose entire datasets or internal systems.

Second Flaw: Codex Vulnerability Enabled GitHub Token Theft

In a separate but equally serious finding, BeyondTrust researchers discovered a command injection vulnerability in OpenAI’s Codex platform.

The flaw allowed attackers to manipulate the system through the GitHub branch name parameter, enabling the execution of arbitrary commands within the Codex cloud environment.

How the Attack Worked

The flaw was caused by inadequate input sanitization in backend API requests. By crafting a malicious branch name, attackers could:

- Inject commands into the Codex workflow

- Run arbitrary payloads inside the containerized environment

- Exfiltrate sensitive credentials

Most importantly, attackers could steal:

- GitHub user access tokens

- GitHub installation tokens

These tokens offer a wide range of permissions, including read/write access to repositories.
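The class of bug described in this section, an attacker-controlled branch name reaching a command line, is usually mitigated by validating the value against a strict allowlist and never passing it through a shell. The following is a minimal sketch under that assumption. It is not OpenAI's actual fix; the helper names and the allowlist policy are hypothetical and intentionally stricter than what Git itself accepts.

```python
import re
import subprocess

# Hypothetical allowlist: must start with an alphanumeric character (so it can
# never be parsed as a command-line option) and contain only a small safe set.
_SAFE_BRANCH = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,199}")


def is_safe_branch_name(name: str) -> bool:
    """Return True only for branch names that match the conservative policy."""
    if not _SAFE_BRANCH.fullmatch(name):
        return False
    if ".." in name or "//" in name:
        return False
    if name.endswith("/") or name.endswith(".lock"):
        return False
    return True


def build_checkout_command(branch: str) -> list[str]:
    """Build an argument list for git; raise ValueError on unsafe input."""
    if not is_safe_branch_name(branch):
        raise ValueError(f"rejected branch name: {branch!r}")
    return ["git", "checkout", branch]


def checkout(branch: str, repo_dir: str) -> None:
    """Run git with an argument list (no shell), so metacharacters stay inert."""
    subprocess.run(build_checkout_command(branch), cwd=repo_dir, check=True)
```

With this policy, a name such as `feature/login-fix` passes, while anything containing spaces, `;`, `$`, backticks, or a leading `-` is rejected before a process is ever started.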

Exploiting Developer Workflows

The attack could be triggered through normal developer activity. For example:

- A malicious branch is created

- A pull request references Codex (e.g., via @codex)

- Codex automatically reviews the code

- The injected payload executes and leaks data

This creates a scalable attack vector, especially in shared or open-source environments.

Patch and Scope of Impact

OpenAI fixed the Codex vulnerability on February 5, 2026, after it was responsibly disclosed in December 2025.

The affected systems include:

- ChatGPT web interface

- Codex CLI

- Codex SDK

- Codex IDE integration

AI Agents as a New Attack Surface

Both weaknesses point to a deeper issue: AI agents are becoming privileged intermediaries between users and critical systems.

Unlike traditional software, these agents:

- Execute code dynamically

- Connect with external services

- Operate with high levels of trust and automation

This makes them attractive targets for attackers seeking lateral movement into enterprise environments.

Industry Wake-Up Call

Taken together, the findings reflect a broader shift in cybersecurity thinking. AI systems are no longer just tools; they are active participants in computing environments, with access to critical data and operational capabilities.

Key lessons from these events include:

- AI guardrails can be bypassed through indirect channels

- Input validation remains essential, even in AI-driven applications

- Trusted platforms (e.g., GitHub) can become attack vectors

- Visibility into AI behavior is still limited

Looking Ahead

While OpenAI’s quick response prevented any known abuse, these incidents highlight the need for a proactive, security-first approach to AI deployment.

Organizations adopting AI technologies are now encouraged to:

- Treat AI systems as part of their primary attack surface

- Implement zero-trust principles for AI interactions

- Continuously audit integrated AI functionality

As AI continues to integrate itself into software development, business operations, and everyday life, these vulnerabilities serve as an early warning: the future of cybersecurity cannot be separated from the security of AI itself.
